Big-O notation

Used to describe the time or space complexity of algorithms. Big-O is used to express upper bound of an algorithm’s time or space complexity, maximum possible resources needed. “How code slows as data grows”

- Only no. of steps to completion

- All smaller operations are ignored

- n = amount of data

Linear Time O(n) example

def addUp(n):

sum = 0

for i in range(n):

sum += i

return sumIf n = 1000 The for loop would loop 1000 times (steps) As n increases the no. of steps also increase in a linear way

Constant Time O(1) example

def addUp(n):

sum = n * (n + 1) / 2

return sumIf n = 1000 or n = 100000 The no. of steps is the same, it does not increase or decrease. It is constant

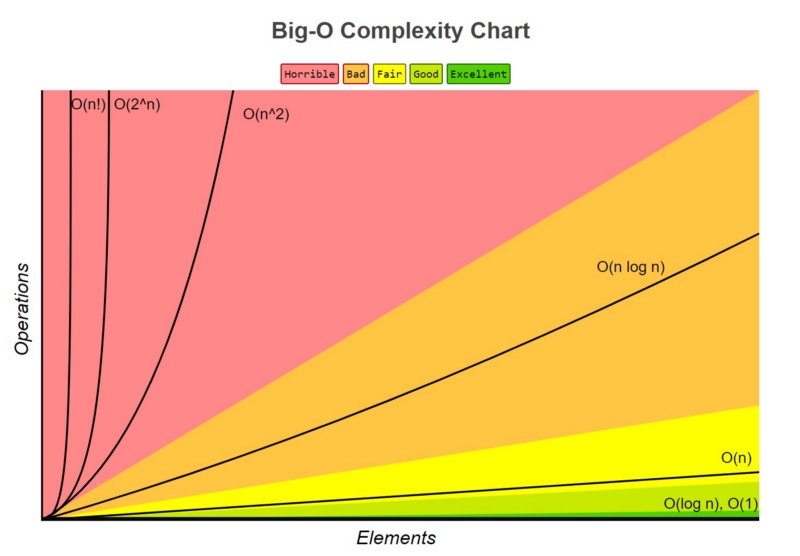

Best to Worst

Constant time = O(1)

Logarithmic time = O(log n)

- Binary Search

Linear time = O(n)

- Looping through an array

- Search through linked list

Quasilinear time = O(n log n)

Similar to linear time but with large datasets, it gets progressively worse

- Quicksort

- Mergesort

- Heapsort

Quadratic time = O(n²)

- Insertion sort

- Selection sort

- Bubble sort

Factorial time = O(n!)

- Travelling salesman problem